My Startup Sabbatical Led Me To Update My Berkeley CS Class

I’m a teaching professor at UC Berkeley in the department of Electrical Engineering and Computer Sciences, though recently I’ve been devoting some of my time to helping build a startup called PerfectRec. As this is my first industry experience, I’ve learned a ton, and it’s got me thinking about how I might introduce more real-world engineering practices into my courses. I’d love to hear your feedback on my initial idea.

The course I teach most often is a 1,500-student data structures class that’s typically taken by freshmen and sophomores. While the course is primarily about CS fundamentals, it also serves as a basic introduction to software engineering. For example, we teach unit testing, test-driven development, and version control. One possibility for improvement is to provide guidance on team workflows. The course has a final project done in teams of 2 or 3, but student approaches are typically quite naive, e.g. team members push directly to their main branch with no regression testing or code review.

At PerfectRec, I’ve seen better ways to do things, having been the head of machine learning, a data scientist, and more recently a software engineer. We’re building a recommendation system, first focusing on consumer electronics such as TVs, phones, and laptops. We ask users multiple-choice questions and try to come up with the perfect suggestion. The long-term plan is to be able to recommend anything, even answers to bigger life questions, like where you should live or go to college. Fun! But getting people coordinated across three teams (web dev, ML dev, human experts) is challenging, especially at a fully remote company.

Inspired by workflows from PerfectRec, I’ve started to brainstorm a 90-minute lab assignment for next year that gives students a small taste of CI and trunk-based development by having all 1,500 students work on a single repo. It doesn’t really matter what they build, so I’ve chosen to have them implement Comparators, which they’ve already seen in the course. To make things a bit more interesting, the MacGuffin is that they’re participating in what I’m tentatively calling “The Lottery in Babylon” (à la Borges).

In the Lottery, each student will submit a unique integer via Google Forms as their champion, giving us ~1,500 unique integers. Each student will also push a Comparator<Integer> of their design to the contest repository.

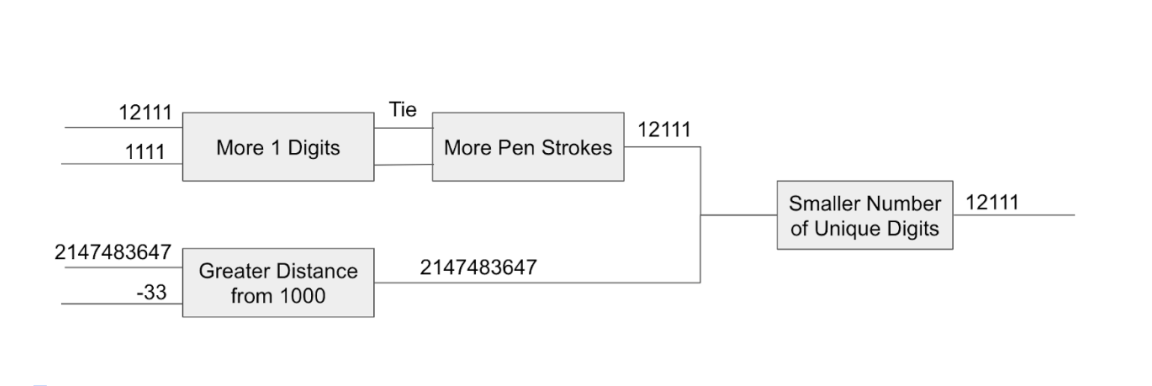

Once we have everyone’s integers and comparators, we’ll hold an 11-round, single-elimination tournament of one-on-one matches until only one integer remains. Each match will be judged using a Comparator selected at random from the pool of 1,500 student submissions. In the case of a tie, we’ll hold a repeat match with another random comparator. The figure shows an example for the case of 4 entrants rather than the 1,500 that we’ll use in the real assignment.

Each student’s Comparator may do anything they want, so long as it is at least somewhat interesting and creates a deterministic total order, i.e. obeys what Knuth calls the “law of trichotomy” (exactly one of a < b, a = b, and a > b is true) and the “law of transitivity” (if a < b and b < c, then a < c).

For example, one student might decide to implement a Comparator that compares two numbers based on how many 1s are in each number, e.g. under this ordering 11851 (three 1s) is less than 1111 (four 1s). Another might count the number of pencil “strokes” they think it takes to write the number in their own handwriting, e.g. 660 < 44. These Comparators are entirely up to the students so long as they result in a total order.
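To make the first example concrete, here’s a minimal sketch of what a “count the 1s” submission might look like. The class name `OnesCountComparator` and the helper `countOnes` are hypothetical, and the numeric tie-break is my own assumption: the Lottery replays tied matches, so a submission could just as reasonably leave ties in place.

```java
import java.util.Comparator;

/** Orders integers by how many '1' digits appear in their decimal form. */
class OnesCountComparator implements Comparator<Integer> {

    /** Counts the '1' characters in the decimal representation of n. */
    static int countOnes(int n) {
        int count = 0;
        for (char c : Integer.toString(n).toCharArray()) {
            if (c == '1') {
                count++;
            }
        }
        return count;
    }

    @Override
    public int compare(Integer a, Integer b) {
        int byOnes = Integer.compare(countOnes(a), countOnes(b));
        // Assumption: break ties by numeric value so that two distinct
        // integers never compare as equal. This is optional in the Lottery.
        return byOnes != 0 ? byOnes : Integer.compare(a, b);
    }
}
```

With this ordering, `new OnesCountComparator().compare(11851, 1111)` is negative, because 11851 contains three 1s and 1111 contains four.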

The chance of any student’s integer encountering their own Comparator is low, so students won’t benefit by creating an order where their own integer is the “biggest”. One open question is how to encourage more creativity, e.g. giving some recognition for especially unique comparators or setting up constraints per student so everyone doesn’t do the same thing.

To show off CI, every student will create their own short-lived branch and submit a pull request for their Comparator to the repo that will be used to run the lottery. We’ll use a GitHub Actions workflow to verify that their Comparator creates a total order.
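I haven’t written the automated check yet, but one way it might work is a brute-force test over a small sample of integers, which the CI workflow would run against each submitted Comparator. The sketch below is only an illustration: the class name `TotalOrderCheck`, the method `isConsistent`, and the choice of sample are all assumptions. It allows ties, since the Lottery replays them, but requires ties to be mutual and consistent.

```java
import java.util.Comparator;
import java.util.List;

/** Brute-force check of ordering laws over a small sample of integers. */
class TotalOrderCheck {

    /** Returns true if cmp is deterministic, antisymmetric, and transitive on the sample. */
    static boolean isConsistent(Comparator<Integer> cmp, List<Integer> sample) {
        for (int a : sample) {
            for (int b : sample) {
                int ab = Integer.signum(cmp.compare(a, b));
                // Determinism: asking the same question twice gives the same answer.
                if (ab != Integer.signum(cmp.compare(a, b))) {
                    return false;
                }
                // Trichotomy: a < b must mean b > a, and a tie must be mutual.
                if (ab != -Integer.signum(cmp.compare(b, a))) {
                    return false;
                }
                for (int c : sample) {
                    int bc = Integer.signum(cmp.compare(b, c));
                    int ac = Integer.signum(cmp.compare(a, c));
                    // Transitivity: a < b and b < c must imply a < c.
                    if (ab < 0 && bc < 0 && ac >= 0) {
                        return false;
                    }
                    // If a ties with b, both must relate to c in the same way.
                    if (ab == 0 && ac != bc) {
                        return false;
                    }
                }
            }
        }
        return true;
    }
}
```

A check like this can only find violations among the integers it samples, so it gives evidence rather than proof. The cost is cubic in the sample size, which is fine for a few hundred integers per pull request.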

To have their pull request approved, every student will have to have their code reviewed and approved by at least 3 reviewers, who will verify that the javadocs for the Comparator match the Comparator’s behavior. Every student will have to approve at least 3 other students’ submissions.

The code review and automated correctness tests are complementary to each other. The automated tests vouch that the Comparator defines a total order. The manual reviewer vouches that the Comparator behaves in the way the javadocs claim.

We’ll set things up so that they can’t modify any of the code used to run the contest, though I’m open to cool ideas about how we can potentially allow this.

Once we have all of the comparators and unique integers, we’ll run the tournament and find the winning integer. I haven’t yet thought of a suitable prize other than the satisfaction of knowing that you won against the odds in an infinite game of chance.
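For the curious, here’s a rough sketch of how the tournament runner might work. None of this is final: the class name `Lottery`, the random seeding, and the rule that an odd entrant out gets a bye are assumptions I’m making for illustration. It also assumes that at least one Comparator in the pool separates every pair of integers, since otherwise the tie replay would never end.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.Comparator;
import java.util.List;
import java.util.Random;

/** Single-elimination tournament judged by randomly chosen comparators. */
class Lottery {

    /** Rounds needed for n entrants, i.e. ceil(log2(n)). */
    static int rounds(int n) {
        int r = 0;
        for (int slots = 1; slots < n; slots *= 2) {
            r++;
        }
        return r;
    }

    /** Plays one match, drawing a new random judge whenever the result is a tie. */
    static int playMatch(int a, int b, List<Comparator<Integer>> judges, Random rng) {
        while (true) {
            Comparator<Integer> judge = judges.get(rng.nextInt(judges.size()));
            int result = judge.compare(a, b);
            if (result != 0) {
                return result > 0 ? a : b; // the "bigger" integer advances
            }
        }
    }

    /** Runs the whole tournament and returns the winning integer. */
    static int run(List<Integer> entrants, List<Comparator<Integer>> judges, Random rng) {
        if (entrants.isEmpty() || judges.isEmpty()) {
            throw new IllegalArgumentException("need at least one entrant and one judge");
        }
        List<Integer> alive = new ArrayList<>(entrants);
        Collections.shuffle(alive, rng); // assumption: random bracket seeding
        while (alive.size() > 1) {
            List<Integer> next = new ArrayList<>();
            for (int i = 0; i + 1 < alive.size(); i += 2) {
                next.add(playMatch(alive.get(i), alive.get(i + 1), judges, rng));
            }
            // Assumption: with an odd number of entrants, the last one gets a bye.
            if (alive.size() % 2 == 1) {
                next.add(alive.get(alive.size() - 1));
            }
            alive = next;
        }
        return alive.get(0);
    }
}
```

Here `Lottery.rounds(1500)` evaluates to 11, matching the 11 rounds mentioned earlier, because 2^10 = 1,024 is too few slots for 1,500 entrants and 2^11 = 2,048 is enough.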

While this is a bit artificial, I think it’ll give a glimpse of how CI practices can be used to coordinate the actions of a vast number of developers, with the obvious shortcoming that each student’s code is completely orthogonal in function to everyone else’s. We can later build upon this experience and provide guidance for using these practices on the small-team capstone project.

I’d love to hear your thoughts on this lab design. Is there anything you’d change or improve or other ways you might try to give freshmen and sophomores in my data structures class a taste of trunk-based development?

Email: josh@perfectrec.com
